An AI content risk and compliance strategy lets you spot the red flags and do what it takes to turn them all green.

It’s easy to overlook risk and compliance amid the rush to gain AI’s productivity and efficiency benefits, but many senior executives are realizing they do so at their peril. Per research from the Conference Board, 72% of U.S. companies now flag AI as a material risk in their public disclosures, up from just 12% two years ago.

Part of the problem is that advancements in AI, like those in most technologies, are happening far more quickly than compliance frameworks. The word “risk” encompasses everything from cyberattacks to reputational hits, and it’s nearly impossible to address them all at once.

“The risks aren’t theoretical anymore,” says Tim Ahlenius, vice-president of strategic initiatives at WordPress VIP partner Americaneagle.com in Des Plaines, Ill. “They show up in conversations during sales and client engagements regularly.”

AI governance for content is a good place to start because content represents invaluable intellectual property. It’s how the market understands who your brand is and what it stands for. It helps drive prospects to convert into paying customers. It’s what you use to educate everyone about your policies and processes, and to build relationships.

When content produced or assisted by AI isn’t properly managed, organizations can face negative media coverage, regulatory fines, lawsuits, and lost revenue. Although you’ll still want to be able to experiment with AI platforms and tools, AI content risk management will provide an important safety net.

The core risk categories of AI-generated content

Enterprise AI compliance needs to span multiple areas of risk, given the variety of content that marketing and other departments publish every day. One of your first steps should be to convene the appropriate internal stakeholders to review your use of AI and its potential impact on the following areas:

Legal and IP risk

“This is usually the first executive concern,” Tim says. “Questions around copyright ownership of AI-generated content, training data security and privacy, and the risk of unintentionally reproducing protected material have all been brought up.”

The concerns likely stem from the fact that brands are not only drawing upon their own source data to inspire campaigns and other assets. Many AI tools are based on large language models (LLMs) that also draw upon publicly available online data to produce social media copy, blog posts, images, and video. Without proper permissions, you could run afoul of copyright and ownership laws.

“There’s still ambiguity in case law, and brands don’t want to be the test case. We’re also seeing more scrutiny around who ‘owns’ AI-assisted outputs, especially in industries where content is core intellectual property. The uncertainty isn’t necessarily stopping innovation, but it is slowing deployment in regulated and large content organizations.”

— Tim Ahlenius, Vice-President of Strategic Initiatives, Americaneagle.com in Des Plaines, Ill.

Regulatory and compliance risk

If you’re doing any business with the European Union, you need any AI-produced or AI-assisted content to align with privacy legislation such as GDPR. Financial services firms have to ensure they don’t hide fees in fine print, while technology and media firms need to consider child protection legislation such as COPPA.

“For healthcare, financial services, insurance, higher ed, and other regulated industries, the bar is much higher,” Tim says. “AI-generated content can’t just be ‘mostly right.’ It has to align with FDA, SEC, ADA accessibility requirements, state-level privacy laws, and increasingly, global AI governance standards.”

As a result, Americaneagle.com is hearing concerns about claims accuracy, required disclaimers when AI is used, accessibility compliance (especially WCAG), and data privacy risks when prompts include sensitive information.

Brand and reputational risk

Remember those voice and tone guidelines the team painstakingly developed? AI tools need to be as up to date on them as your best employees are. If they aren’t, you could publish content that’s inaccurate or biased, which erodes trust, the opposite of what brand marketing is supposed to do.

“Hallucinated facts are the most immediate risk, along with subtle bias, tone misalignment, and outdated information being presented confidently,” Tim says. “What makes AI powerful is also what makes it risky, such as when it generates authoritative-sounding content at scale.”

If governance isn’t in place, you can scale inconsistency just as fast as you scale productivity, he adds. “We’ve seen clients worry less about ‘is AI allowed?’ and more about ‘what happens when something wrong gets published?’”

Operational risk

You might have officially authorized some AI tools and platforms for employees to use, but others could be quietly deployed on the front lines. Shadow AI poses a huge risk because there’s no guarantee the AI tools staff self-select will adhere to your policies. This makes auditing AI outputs even harder when questions arise.

“This is the quiet one, but it’s growing fast. Shadow AI usage inside organizations is widespread. Marketing teams using tools without disclosure,” Tim says. “Sales teams pasting proprietary data into public models. Different departments are using different models with no standards.”

Without traceability, version control, or prompt governance, companies lose auditability, reproducibility, and clear lines of accountability, Tim adds. “In many organizations, the operational risk is now larger than the content risk itself.”

What compliance actually means for AI-assisted publishing

Responsible AI publishing isn’t just a necessary evil. A survey from PwC found it boosts ROI and efficiency, and 55% of business leaders report improvements in customer experience and innovation.

“The early experimentation and BYOAI or unmoderated approach has shifted toward structured governance for larger organizations.”

— Tim Ahlenius, Vice-President of Strategic Initiatives, Americaneagle.com in Des Plaines, Ill.

These are some of the initial steps to putting it in place:

1. Review policies, standards, and acceptable-use definitions

AI content risk and compliance don’t happen in a vacuum. You should already have policies and standards that stipulate how AI-produced or assisted content is labelled, what types of content are prohibited, and what level of human oversight is required in publishing workflows.

Tim notes most enterprise brands now have documented AI acceptable use policies, which typically cover:

- Approved tools

- Prohibited data types used within AI (PII, PHI, confidential information)

- Disclosure requirements of AI utilization

- Human-in-the-loop review standards before publishing
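To make the first two items concrete, here is a minimal sketch of a pre-submission check that screens a prompt against an approved-tool list and a few prohibited data patterns. Everything in it is an assumption for illustration: the tool name, the `check_prompt` function, and the two regular expressions are hypothetical, and real PII or PHI detection needs far broader coverage than this.

```python
import re

# Hypothetical patterns for illustration only. Real PII/PHI detection
# needs much wider coverage and usually a dedicated tool.
PROHIBITED_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

# Hypothetical allowlist standing in for the policy's "approved tools".
APPROVED_TOOLS = {"approved-assistant"}


def check_prompt(tool: str, prompt: str) -> list[str]:
    """Return a list of policy violations for a prompt sent to an AI tool."""
    violations = []
    if tool not in APPROVED_TOOLS:
        violations.append(f"unapproved tool: {tool}")
    for label, pattern in PROHIBITED_PATTERNS.items():
        if pattern.search(prompt):
            violations.append(f"prohibited data: {label}")
    return violations
```

An empty list means the prompt passed these particular checks, not that it is safe; human review standards still apply.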

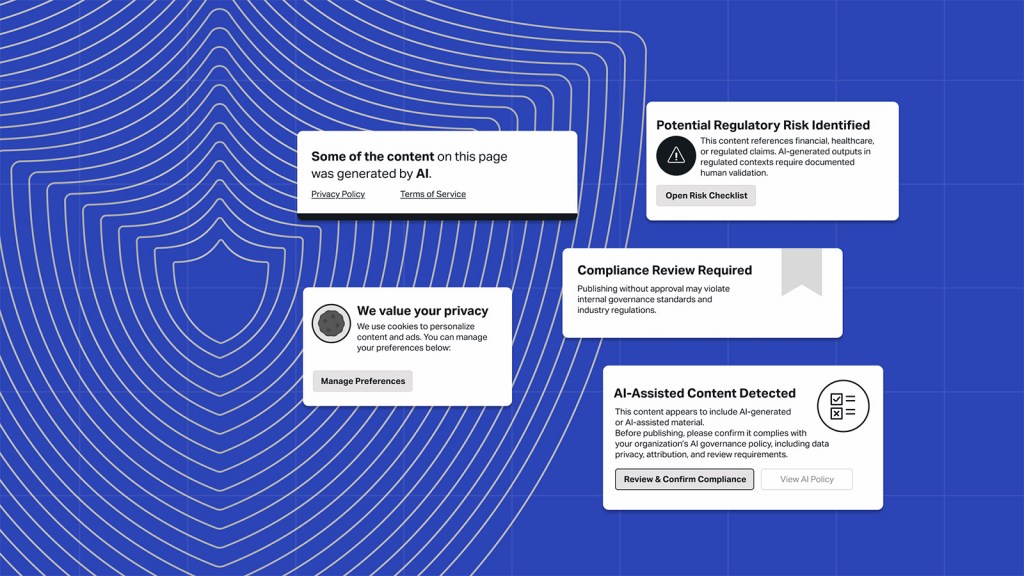

2. Develop (or enhance) disclosure and transparency requirements

A lot of AI work happens behind the scenes, and customers may not know how your content was produced. They expect and deserve to know, which is why disclosures should be included as a standard part of the publishing process. Transparency is even more important when customers engage with interactive content, such as a chatbot or virtual assistant.

This should be coupled with a focus on helping everyone on the team understand where AI fails, how bias can emerge, and when to escalate review, Tim says. That way, employees can get ahead of challenging issues customers might face.

“Interestingly, training has become a major mitigation strategy,” he says. “The risk often isn’t the model. It’s overconfidence in the model. Brands are investing in internal education.”

3. Bolster recordkeeping, audit trails, and documentation

AI can help produce quality content faster and more easily than human beings working on their own, but that should never come at the expense of explainability and auditability. In other words, it should be easy to understand why and how an AI platform or tool produced a certain output. This means logging source materials, prompts, decision trees, and version histories.

Tim says more sophisticated organizations are implementing:

- Prompt libraries with approved templates

- Version tracking of AI-assisted content

- Logging systems for traceability

“In regulated industries, auditability is becoming just as important as output quality,” he adds.
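As a rough illustration of what such a log entry could hold, the sketch below records the tool, prompt, sources, version, and approvals for one piece of AI-assisted content, plus a hash so later changes to the record can be detected. The `AuditRecord` class, its field names, and `append_record` are hypothetical and not tied to any particular CMS or logging product.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field


@dataclass
class AuditRecord:
    """One traceability entry for a piece of AI-assisted content (hypothetical schema)."""
    content_id: str
    version: int
    tool: str
    prompt: str
    sources: list[str]
    approvals: list[str] = field(default_factory=list)

    def fingerprint(self) -> str:
        """Return a stable SHA-256 hash of the record's fields."""
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_record(log: list[dict], record: AuditRecord) -> dict:
    """Append the record and its fingerprint to an in-memory log."""
    entry = {**asdict(record), "fingerprint": record.fingerprint()}
    log.append(entry)
    return entry
```

In practice the log would live in durable, access-controlled storage rather than a Python list; the in-memory version only shows the shape of the data.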

4. Maintain human oversight and accountability models

There should always be people to stand behind the content AI helps you produce. Define exactly when and how staff should review and intervene in AI-powered content workflows, and what accountability entails for final approvals and sign-offs prior to publishing.

“We recommend implementing structured review layers,” Tim says. “AI-generated content does not go straight to production. Editorial oversight, legal review (where appropriate), and fact-checking workflows are built in.”

Governance models for managing AI content risk

Perhaps not surprisingly, many organizations are looking to AI to address AI risk management. A Moody’s report found more than 50% of organizations are currently using or trialing AI for risk and compliance functions. While more AI risk management solutions are bound to emerge, they should always be paired with compliance frameworks for AI-generated content.

Organizations that set up a dedicated AI council, for instance, might opt for a centralized AI governance approach. This means that only the people who belong to that group will approve LLMs and AI models, set policies, and monitor usage across content workflows.

The other option is a federated approach, in which AI governance for content is handled jointly by multiple teams or functions across the enterprise. This can make it easier to keep up with what’s happening on the front lines and to share responsibility for achieving and maintaining compliance.

Whichever route you take, make sure to define the role and scope of responsibilities for those in areas such as legal, IT, and sometimes even HR, as well as your content team. You’ll avoid duplication of effort and needless miscommunication as issues arise.

Your enterprise AI compliance efforts also need to go beyond policies to include employee training, especially on approval workflows and escalation paths.

How CMS platforms enable compliant AI workflows

Managing AI content risk and compliance doesn’t need to be a manual activity. A modern, enterprise-grade CMS will have built-in features and capabilities that can help, including:

- Role-based permissions and workflow controls: AI should be locked out of content workflows that are meant to be handled solely by humans based on the level of risk they involve. The same goes for employees: the ability to create, edit, or publish should be restricted to those with the proper level of authority, which is what a good CMS can do.

- Guardrails for AI use in content creation: The right alert at the right time can help organizations avoid high costs from regulatory fines and other negative outcomes. An analytics platform like Parse.ly, for instance, offers content intelligence to monitor for performance anomalies and unacceptable distribution patterns. If you’re worried about your content being scraped for inappropriate uses, WordPress VIP integrations like Tollbit can block or even charge AI crawlers.

- Tools for supporting audits, reporting, and continuous oversight: Look for a CMS with dashboards with detailed logging, version controls, and the ability to use metadata and taxonomies to classify content for easier auditability.
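The role-based permissions idea in the first bullet can be sketched in a few lines. This is not the API of WordPress VIP or any other CMS; the role names, the `HUMAN_ONLY_WORKFLOWS` set, and `is_allowed` are hypothetical and only show the logic of keeping AI out of human-only workflows while limiting publishing rights by role.

```python
# Hypothetical roles and the actions each may perform.
ROLE_PERMISSIONS = {
    "ai_assistant": {"create"},
    "writer": {"create", "edit"},
    "editor": {"create", "edit", "publish"},
}

# Hypothetical workflows that policy reserves for humans.
HUMAN_ONLY_WORKFLOWS = {"legal-notices"}


def is_allowed(role: str, action: str, workflow: str) -> bool:
    """Check whether a role may perform an action in a given workflow."""
    # AI is locked out of human-only workflows regardless of action.
    if role == "ai_assistant" and workflow in HUMAN_ONLY_WORKFLOWS:
        return False
    # Unknown roles get no permissions.
    return action in ROLE_PERMISSIONS.get(role, set())
```

For example, `is_allowed("ai_assistant", "create", "blog")` passes, while the same role is refused in the `legal-notices` workflow and can never publish.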

Striking the right balance between innovation and risk reduction

“The companies doing this well are not choosing between speed and safety. They’re designing systems that allow both.”

— Tim Ahlenius, Vice-President of Strategic Initiatives, Americaneagle.com in Des Plaines, Ill.

Here are Tim’s practical recommendations on how to strike the right balance:

Separate experimentation from production

Create sandbox environments where teams can explore freely while clearly outlining guardrails between experimentation and publishable content. Innovation thrives when teams aren’t afraid. Governance works when those guardrails are visible and understood.

Ground AI in authoritative data

“The most effective risk reduction strategy we see is grounding generative AI in structured, approved content through retrieval-based architectures,” he says.

You dramatically reduce hallucination and compliance risk when AI responses are anchored in your own trusted data, such as:

- Approved policy documents

- Verified product data

- Controlled knowledge bases
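A toy version of this retrieval-based grounding might look like the sketch below. It ranks approved passages by simple word overlap with a question and builds a prompt that carries only those passages. The sample passages are made up, the function names are hypothetical, and production systems typically use search indexes or embeddings rather than word overlap.

```python
import re

# Made-up sample content standing in for approved, controlled sources.
APPROVED_PASSAGES = [
    {"source": "refund-policy", "text": "Refunds are available within 30 days of purchase."},
    {"source": "product-specs", "text": "The starter plan includes five user seats."},
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, passages: list[dict], top_k: int = 1) -> list[dict]:
    """Rank approved passages by word overlap with the question."""
    q = tokenize(question)
    scored = [(len(q & tokenize(p["text"])), p) for p in passages]
    scored = [s for s in scored if s[0] > 0]  # drop passages with no overlap
    scored.sort(key=lambda s: s[0], reverse=True)
    return [p for _, p in scored[:top_k]]


def build_grounded_prompt(question: str, passages: list[dict]) -> str:
    """Build a prompt limited to approved sources, or "" if none match."""
    hits = retrieve(question, passages)
    if not hits:
        return ""  # no approved source found: escalate to a human instead
    context = "\n".join(f"[{p['source']}] {p['text']}" for p in hits)
    return f"Answer using only the sources below.\n{context}\nQuestion: {question}"
```

Returning an empty prompt when nothing matches is a deliberate choice here: it forces the caller to handle the "no approved source" case rather than letting the model answer from its general training data.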

Establish tiered risk models

Not all content carries equal risk. A blog brainstorm session does not carry the same exposure as a regulated medical claim page. Smart organizations define risk tiers and apply review intensity in proportion to them. This keeps simple, low-risk work from being slowed down by too many rules, while still ensuring high-risk content is carefully reviewed and protected.
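One way to picture a tiered model is as a lookup from risk tier to the reviews that must be complete before publishing. The tiers, review names, and functions below are hypothetical; each organization would define its own.

```python
from enum import Enum


class RiskTier(Enum):
    LOW = 1     # e.g. an internal blog brainstorm
    MEDIUM = 2
    HIGH = 3    # e.g. a regulated medical claim page


# Hypothetical mapping of tier to required review steps.
REQUIRED_REVIEWS = {
    RiskTier.LOW: {"editorial"},
    RiskTier.MEDIUM: {"editorial", "fact_check"},
    RiskTier.HIGH: {"editorial", "fact_check", "legal"},
}


def missing_reviews(tier: RiskTier, completed: set[str]) -> set[str]:
    """Return the reviews still outstanding for content in this tier."""
    return REQUIRED_REVIEWS[tier] - completed


def can_publish(tier: RiskTier, completed: set[str]) -> bool:
    """Content may publish only when no required review is missing."""
    return not missing_reviews(tier, completed)
```

With this mapping, low-risk content clears after a single editorial pass, while a high-risk page with only editorial sign-off still shows fact-checking and legal review as outstanding.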

Design for transparency

Traceability, logging, and disclosure are becoming competitive advantages. Future regulation is likely to demand this anyway, so now is the time to get ahead of the curve. If something goes wrong, companies need to show:

- What tool was used

- What prompt was applied

- What human approvals occurred

Build AI literacy at the leadership level

Leaders don’t need to know all the technical details about how AI is built. But they do need to understand that AI gives answers based on patterns and probabilities, that it can make mistakes, and that it can sometimes show bias.

“The most successful companies are led by people who know that AI is a helpful tool that makes human work stronger, not something that should make important decisions on its own,” he says.

From risk avoidance to responsible AI operations

AI governance for content isn’t a task you can manage off the side of your desk. It needs to be embedded in everyday workflows, where the relevant teams can monitor the outcomes of AI decisions and adjust policies over time.

Taking a proactive stance on AI content risk management also prepares organizations for evolving regulations and changing expectations of customers, investors, and legislators. Your goal should be to use governance to unlock rather than limit AI’s value in your content operations.

A solid risk and compliance strategy will also help you get the green light to expand your AI use faster, knowing it will only enhance your brand’s trust and credibility with your audience.

“AI-generated content is not inherently risky; unstructured AI usage is. The real problem happens when people use AI without clear rules or guidance. The companies that will succeed aren’t the ones that avoided AI. They’re the ones that set clear rules early, made sure AI follows their brand standards and laws, and built it into their normal way of working.”

— Tim Ahlenius, Vice-President of Strategic Initiatives, Americaneagle.com in Des Plaines, Ill.

Author

Shane Schick

Founder, 360 Magazine

Shane Schick is a longtime technology journalist serving business leaders ranging from CIOs and CMOs to CEOs. His work has appeared in Yahoo Finance, the Globe & Mail and many other publications. Shane is currently the founder of a customer experience design publication called 360 Magazine. He lives in Toronto.